I’ve found that AI has done literally nothing to improve my life in any way and has really just caused endless frustrations. From the enshittification of journalism to ruining pretty much all tech support and customer service, what is the point of this shit?

I work on the Salesforce platform and now I have their dumbass account managers harassing my team to buy into their stupid AI customer service agents. Really, the only AI highlight that I have seen is the guy that made the tool to spam job applications to combat worthless AI job recruiters and HR tools.

I created a funny AI voice recording of Ben Shapiro talking about cat girls.

Then it was all worth it.

ChatGPT is incredibly good at helping you with random programming questions, or at taking a full-ass error text you dump on it and telling you exactly what’s wrong.

This afternoon I used ChatGPT to figure out what the error was that kept me from updating my ESXi server. I just copy-pasted the entire error text, which was one entire terminal window’s worth of shit, and it knew that there was an issue accessing the zip. It wasn’t smart enough to figure out “hey dumbass give it a full file path not relative” but eventually I got there. Earlier this morning I used it to write a cross apply instead of using multiple sub select statements. It forgot to update the order by, but that was a simple fix. I use it for all sorts of other things we do at work too. ChatGPT won’t replace any programmers, but it will help them be more productive.

It’ll also save the programmers questions from the moderately technically-inclined non-programmers at work! Haha

A lot of papers are showing that the code written by people using ChatGPT has more vulnerabilities and uses more obsolete libraries. Using ChatGPT actively makes you a worse programmer, according to that logic.

Yes. It’s not an expert. It feigns this, but it’s all lies.

Agree to disagree. If you trust this, you’re a fool. Trust me, I’ve tried for hours asking it about a myriad of tech issues, and it just constantly fucking lies.

It can help you, but NEVER trust it. Never. Google everything it tells you if it’s important.

If you blindly trust it then yeah it will cause problems. But if you know what you’re doing, but forget X or Y minor thing here and there, or just need some direction it’s amazing.

Honestly, if that is your impression, I think you’re using it wrong and expecting the wrong results from it.

If AI is for anything it’s for DnD campaign art.

Make your NPCs and towns and monsters!

Or helping to come up with some plot hooks in a pinch.

Same. When I’ve got a session coming up with less than ideal prep time, I’ve used ChatGPT to help figure out some story beats. Or reframe a movie plot into DnD terms. But more often than not I use the Story Engine Deck to help with writer’s block. I’d rather support a small company with a useful product than help Sam Altman boil the oceans.

Lol beat me to it. For a lot of generic art, even more customized stuff, it works well.

It’s also pretty great at giving stats to homebrew monsters, or making variations of regular monsters.

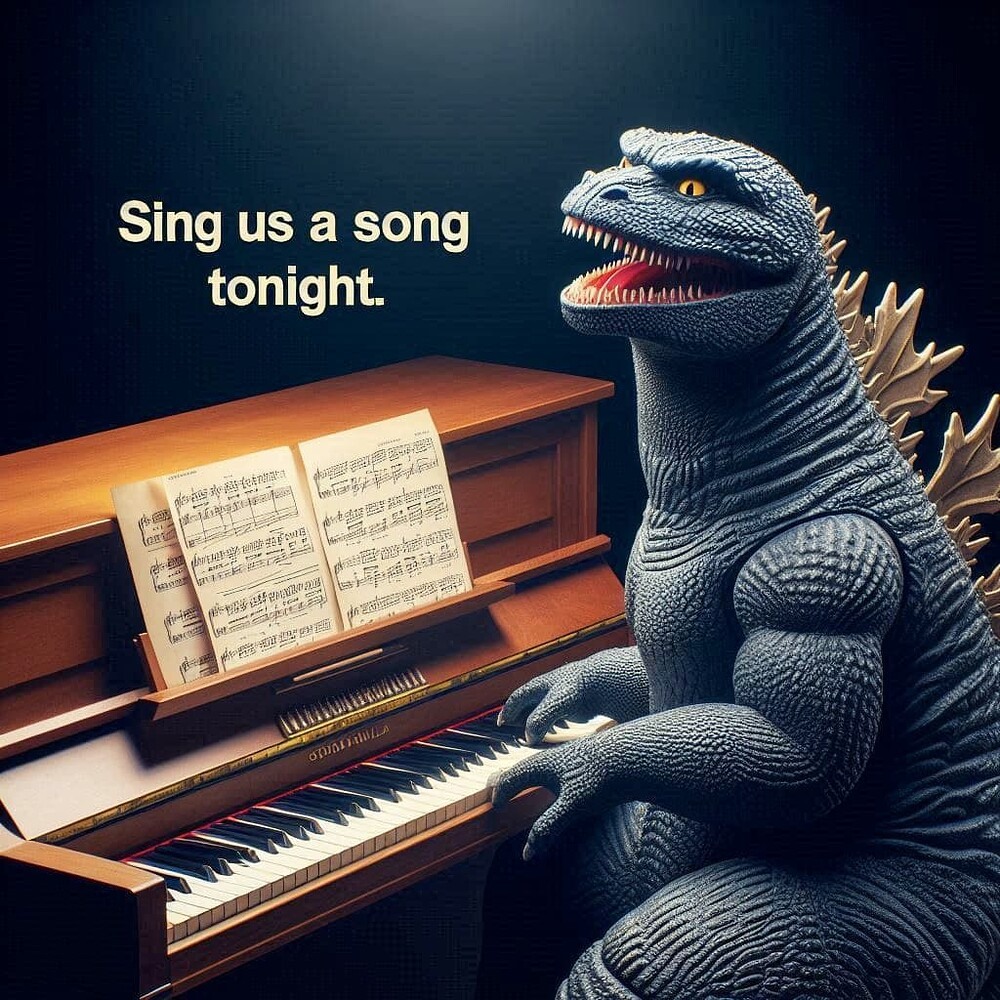

I agree, I don’t really use it but I do like some of the memes that came out of it, case in point:

Ah fuck I thought that photo was real.


I thought it was pretty fun to play around with making limericks and rap battles with friends, but I haven’t found a particularly useful use case for LLMs.

I like asking ChatGPT for movie recommendations. Sometimes it makes some shit up but it usually comes through, I’ve already watched a few flicks I really like that I never would’ve heard of otherwise

I use it often for grammar and syntax checking

I tried to give it a fair shake at this, but it didn’t quite cut it for my purposes. I might be pushing it out of its wheelhouse though. My problem is that, while it can rhyme more or less adequately, it seems to have trouble with meter, and when I do this kind of thing, it revolves around rhyme/meter perfectionism. Of course, if I were trying to actually get something done with it instead of just seeing if it’ll come up with something accidentally cool, it would be reasonable to take what it manages to do and refine it. I do understand to some extent how LLMs work, in terms of what tokens are and why this means it can’t play Wordle, etc., and I can imagine this also has something to do with why it’s bad at tightly lining up syllable counts and stress patterns.

That said, I’ve had LLMs come up with some pretty dank shit when given the chance: https://vgy.me/album/EJ3yPvM0

Most of it is either the LLMs shitting themselves or GPT doing that masturbatory optimism thing. Da Vinci’s “Suspicious mind…” in the second image is a little bit heavyish though. And those last two (“Gangsterland” and “My name is B-Rabbit, I’m down with M.C.s, and I’m on the microphone spittin’ hot shit”) are god damn funny.

ChatGPT enabled me to automate a small portion of my former job. So that was nice.

Personally I use it when I can’t easily find an answer online. I still keep some skepticism about the answers given until I find other sources to corroborate, but in a pinch it works well.

Because of the way it’s trained on internet data, large models like ChatGPT can actually work pretty well as a sort of first-line search engine. My girlfriend uses it like that all the time, especially for obscure stuff in one of her legal classes. It can bring up the right details to point you towards googling the correct document rather than muddling through really shitty library case page searches.

especially when you use something with inline citations like bing

It tends to make Lemmy people mad for some reason, but I find GitHub copilot to be helpful.

Burn this witch, boys!

Leave the poor witch alone, he wrote it himself.

But he turned me into a newt!

Yes:

- Demystifying obscure or non-existent documentation

- Basic error checking my configs/code: input the error, ask what the cause is, double check its work. In hour 6 of late night homelab fixing this can save my life

- I use it to create concepts of art I later commission. Most recently I used it to concept an entirely new avatar and I’m having a pro make it in their style for pay

- DnD/Cyberpunk character art generation, this person does not exist website basically

- Duplicate checking / spot-the-differences, like Pastebin’s “differences” feature, because the MMO I play released prelim as well as full patch notes and I like to read the differences
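
For the patch-notes case in that last bullet, a plain text diff also does the job and can't hallucinate. A minimal sketch using Python's standard `difflib`, assuming both versions of the notes are available as plain text; the sample notes and the `diff_notes` helper are made up for illustration:

```python
import difflib


def diff_notes(prelim: str, full: str) -> list[str]:
    """Return unified-diff lines showing how the full notes differ from the prelim ones."""
    return list(
        difflib.unified_diff(
            prelim.splitlines(),
            full.splitlines(),
            fromfile="prelim",
            tofile="full",
            lineterm="",  # input lines carry no trailing newline, so add none
        )
    )


# Invented patch notes, purely for illustration.
PRELIM = """Fixed crash on login
Reduced fireball damage by 10%
New mount added"""

FULL = """Fixed crash on login
Reduced fireball damage by 5%
New mount added
Raised level cap to 70"""

if __name__ == "__main__":
    for line in diff_notes(PRELIM, FULL):
        print(line)
```

Lines starting with `-` only appear in the prelim notes, lines starting with `+` only in the full ones. An LLM is still handy when the wording was reshuffled rather than edited line by line, which a line-based diff handles poorly.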

I got high and put in prompts to see what insane videos it would make. That was fun. I even made some YouTube videos from it. I also saw some cool & spooky short videos that are basically “liminal” since it’s such an inhuman construction.

But generally, no. It’s making the internet worse. And as a customer I definitely never want to deal with an AI instead of a human.

100%. I don’t need help finding what’s on your website. I can find that myself. If I’m contacting customer support it’s because my problem needs another brain on it, from the inside. Someone who can think and take action to help me. Might require creativity or flexibility. AI has never helped me solve anything.

Oh I hate those chat bots which just display a list of articles matching keywords in your question.

I think people were already making the internet worse. AI just helps them make it worse faster.

I mean, yeah, but that difference is quite crucial.

People have always wanted to be the top search result without putting effort in, because that brings in ad money.

But without putting effort in, their articles were generally short, had typos, and there were relatively few such articles. Now, LLMs allow these same people to pump out a hundred times as much garbage, consisting of lengthy articles in many languages. And because LLMs are specifically trained to produce texts that are human-like, it’s difficult for search engines to filter out these bad quality results.

I’ve found it’s made doing end-runs around enshittification easier.

For example, trying to find a front suspension top for a Peugeot 206 GTi with Google means being recommended everything front suspension for the Peugeot 207, 208, VW GTI, Swift GTi… not to mention the websites with “Best price on insert what you searched for here”, only they sell nothing.

So I ask ChatGPT for the part number and search that.

This is exactly the kind of thing that LLMs are good for. I also use them to get quick and concise answers about programming frameworks, instead of trying to triangulate the answer from various anecdotes on stackoverflow, or reading two hours of documentation.

But I figured this kind of thing doesn’t count as “slop.” OP was talking about the incoherent trash hallucinations, so I left that one out.

AI is used extensively in science to sift through gigantic data sets. Mechanical turk programs like Galaxy Zoo are used to train the algorithm. And scientists can use it to look at everything in more detail.

Apart from that AI is just plain fun to play around with. And with the rapid advancements it will probably keep getting more fun.

Personally I hope to one day have an easy and quick way to sort all the images I have taken over the years. I probably only need a GPU in my server for that one.

anyone who uses machine learning like that would probably take issue with it being called AI too

Meh, language evolves. Can’t fight it, might as well join them.

In the sense that a forum I am on has had a huge amount of fun doing very silly things with Godzilla, yes.

https://forums.mst3k.com/t/dall-e-fun-with-an-ai/24697/8237

It’s best to start at the bottom. We didn’t start out with Godzilla when the thread began and it also began in 2022.

8220 posts, the majority Godzilla-related. I haven’t done too many lately, but here’s a few recent ones:

Tits, on an egg-laying reptile?

I’m not completely sure this is a real photo

How dare you mock a widow in mourning!

I use perplexity.ai more than google now. I still don’t love it and it’s more of a testament to how far google has fallen than the usefulness of AI, but I do find myself using it to get a start on basic searches. It is, dare I say, good at calorie counting and language learning things. Helps calculate calorie to gram ratios and the math is usually correct. It also helps me with German, since it’s good at finding patterns and how German people typically say what I am trying to say, instead of just running it through a translator which may or may not have the correct context.
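
Since the math is only "usually" correct, the calorie-to-gram part is easy to double-check by hand or with a few lines of code. A rough sketch in Python; the function names are hypothetical and the label numbers are invented for illustration, not real nutrition data:

```python
def calories_per_gram(calories: float, grams: float) -> float:
    """Calories per gram for a serving with the given calories and weight."""
    if grams <= 0:
        raise ValueError("grams must be positive")
    return calories / grams


def calories_for(portion_grams: float, label_calories: float, label_grams: float) -> float:
    """Scale a label's calorie count to an arbitrary portion weight."""
    return portion_grams * calories_per_gram(label_calories, label_grams)


if __name__ == "__main__":
    # Hypothetical label: 250 kcal per 100 g serving.
    print(calories_per_gram(250, 100))
    print(calories_for(40, 250, 100))
```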

I do miss the days where I could ask AI to talk like Obama while he’s taking a shit during an earthquake. ChatGPT would let you go off the rails when it first came out. That was a lot of fun and I laughed pretty hard at the stupid scenarios I could come up with. I’m probably the reason the guardrails got added.

i switched to kagi a year ago as i usually need to go through search results. i was astonished at just how dogpoop google search is compared to it.

youtube was even worse, i had to go through 10 unrelated videos to find one slightly relevant one. kagi usually doesn’t have the latest results but is on point on relevancy.

I looked at kagi but I don’t want to pay for it because I’m a cheap bastard.

Searx uses different search engines to get the best results. There are many public instances or if you can self host, you can run it privately.

yeah i have wanted to try it. will likely do once the kagi subscription is near the renewal.

haha, no shame in that, i was hesitant myself but one day just gave in out of anger after getting just ad infested garbage from google for a work related topic

> youtube was even worse, i had to go through 10 unrelated videos to find one slightly relevant one.

Last month I typed letter for letter the title of a video I saw on there in YouTube search and it tried so hard to push some other barely related videos, I couldn’t believe it. I ended up typing the url manually like some internet cave man

wow, i mean it’s a free service but with the amount of money they make from selling our data they should at least try to not make us severely hate their product

The things that make us hate it are how they make so much money.

IMO YouTube and social media are both things that would be better as public services than for profit ventures. The things they need to do to make money either make the product shitty (holy shit @ some of the things I’ve heard from people who don’t block ads) or are outright bad for society (misinformation and all).

not happening. bigtech will kill any such attempt by throwing a few million at senators. these products make close to 100 billion in profit a year. aipac just showed us how wretched our political system is when they get to do a genocide with our money and then get a standing ovation from us, and all that with a lobbying budget of just 300 million

Yeah, this current system looks pretty fucking captured to me.

Some things look like signs that things might not be that bad, like the Google ruling is a step in the right direction. But on the other hand, IMO it wasn’t enough of a step and there was a ruling against MS 20 years ago that looked really good until it was just dropped entirely (though apparently the experience did still affect Gates when he was embarrassed about having to explain his position and realizing that most people didn’t agree with it).

Today’s billionaires don’t seem to have that humility anymore, at least not the more prominent ones. Just like the right wing politicians. And all of it enabled by the billionaire-owned media.

well said, totally agree. the depressing thing is that i don’t see this changing anytime soon, or even far in the future. with ai powered drones the working class will have no means to challenge the oligarchy unless they end up fighting and killing each other.

I just tried it and was pleasantly surprised.

> It also helps me with German, since it’s good at finding patterns and how German people typically say

Depending on your first language I can offer you my assistance as a native German speaker :)

If you want to, pm me or send a message to my email: lemmy@relay2moritz.mozmail.com

I am moving to Germany next year. Even though I was born there and my mother taught me some, and I learned it in high school, and I also studied it in college in the USA, I cannot speak it worth shit. I’m hoping I pick up some more when I move, but if not maybe my kids can teach me.

I find that I’m just trying to pick up things through osmosis. I watch German youtubers and try to watch a German movie or two every now and then. And then sometimes when I’m talking I try to directly translate what I’m saying in my head, and assuming I know the words, I usually fuck up the order, article, or tense.

I say all that to say that my current workflow is already overwhelming and I’m on a bit of a time crunch. I do really need to surround myself with native speakers and listen to them more. I will reach out. Thanks!

If you want more recommendations for German YT content, what are some general interests of yours?

For example: comedic storytelling, memes, game/talk shows, technical stuff, etc.?

Edit:

> And then sometimes when I’m talking I try to directly translate what I’m saying in my head, and assuming I know the words, I usually fuck up the order, article, or tense.

We have two foreign colleagues at work, one from Syria and one from Russia. Both spoke relatively broken German when they started, but they have improved so much since I met them. One of them did go to a German language school. And even after 6 years some errors are made. Nothing to worry about if you can get the idea across.

I use SillyTavern for character conversations, pretty fun. I have SD Forge for Pony Diffusion, and use Suno and Udio. Almost all of that goes to DnD, the rest for personal recreation. Google and OpenAI all fail to meet my use cases and if I cuss they get mad so fuck em. I never use those for making money or any other personal progression, that would be wrong.

Garbage in; garbage out. Using AI tools is a skillset. I’ve had great use with LLMs and generative AI both, you just have to use the tools to their strengths.

LLMs are language models. People run into issues when they try to use them for things not language related. Conversely, it’s wonderful for other tasks. I use it to tone check things I’m unsure about. Or feed it ideas and let it run with them in ways I don’t think to. It doesn’t come up with too much groundbreaking or new on its own, but I think of it as kinda a “shuffle” button, taking what I have already largely put together, and messing around with it til it becomes something new.

Generative AI isn’t going to make you the next Mona Lisa, but it can make some pretty good art. It, once again, requires a human to work with it, though. You can’t just tell it to spit out an image and expect 100% quality, 100% of the time. Instead, it’s useful to get a basic idea of what you want in place, then take it to a proper photo editor, or inpainting, or some other kind of post processing to refine it. I have some degree of aphantasia - I have a hard time forming and holding detailed mental images. This kind of AI approaches art in a way that finally kinda makes sense for my brain, so it’s frustrating seeing it shot down by people who don’t actually understand it.

I think no one likes any new fad that’s shoved down their throats. AI doesn’t belong in everything. We already have a million chocolate chip cookie recipes, and ChatGPT doesn’t have taste buds. Stop using this stuff for tasks it wasn’t meant for (unless it’s in a novelty “because we could” kind of way) and it becomes a lot more palatable.

> This kind of AI approaches art in a way that finally kinda makes sense for my brain, so it’s frustrating seeing it shot down by people who don’t actually understand it.
>
> Stop using this stuff for tasks it wasn’t meant for (unless it’s a novelty “because we could” kind of way) and it becomes a lot more palatable.

Preach! I’m surprised to hear it works for people with aphantasia too, and that’s awesome. I personally have a very vivid mind’s eye and I can often already imagine what I want something to look like, but could never put it to paper in a satisfying way that didn’t cost an excruciating amount of time. GenAI allows me to do that with still a decent amount of touch up work, but in a much more reasonable timeframe. I’m making more creative work than I’ve ever been because of it.

It’s crazy to me that some people at times completely refuse to even acknowledge such positives about the technology, refuse to interact with it in a way that would reveal those positives, refuse to look at more nuanced opinions of people that did interact with it, refuse even simple facts about how we learn and interact with other art and material, and refuse to accept legal realities like the freedom to analyze that allow this technology to exist (sometimes even actively fighting to restrict those legal freedoms, which would hurt more artists and creatives than it would help, and give even more power to corporations and those with enough capital to self sustain AI model creation).

It’s tiring, but luckily it seems to be mostly an issue on the internet. Talking to people (including artists) in real life about it shows that it’s a very tiny fraction that holds that opinion. Keep creating 👍

There’s a handful of actual good use-cases. For example, Spotify has a new playlist generator that’s actually pretty good. You give it a bunch of terms and it creates a playlist of songs from those terms. It’s just crunching a bunch of data to analyze similarities with words. That’s what it’s made for.

It’s not intelligence. It’s a data crunching tool to find correlations. Anyone treating it like intelligence will create nothing more than garbage.