Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post Xitter web has spawned soo many “esoteric” right wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be)

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

Here’s LWer “johnswentworth”, who has more than 57k karma on the site and can be characterized as a big cheese:

My Empathy Is Rarely Kind

I usually relate to other people via something like suspension of disbelief. Like, they’re a human, same as me, they presumably have thoughts and feelings and the like, but I compartmentalize that fact. I think of them kind of like cute cats. Because if I stop compartmentalizing, if I start to put myself in their shoes and imagine what they’re facing… then I feel not just their ineptitude, but the apparent lack of desire to ever move beyond that ineptitude. What I feel toward them is usually not sympathy or generosity, but either disgust or disappointment (or both).

“why do people keep saying we sound like fascists? I don’t get it!”

“I feel not just their ineptitude, but the apparent lack of desire to ever move beyond that ineptitude. What I feel toward them is usually not sympathy or generosity, but either disgust or disappointment (or both).” - Me, when I encounter someone with 57K LW karma

My ‘I actually do not have empathy’ shirt is …

E: late edit, shoutout two whomever on sneerclub called lw/themotte an empathy removal training center. That one really stuck with me.

Empathy is when you’re disgusted by people you think are below you, right???

the kind of people who think “look, no one said they don’t have empathy. they have empathy. I’ve seen it. it’s at their house tied up in the basement. apparently they have some kind of thing going on” is a normal line

I guarantee that this guy thinks he could fight a bear.

TIL digital toxoplasmosis is a thing:

https://arxiv.org/pdf/2503.01781

Quote from abstract:

“…DeepSeek R1 and DeepSeek R1-distill-Qwen-32B, resulting in greater than 300% increase in the likelihood of the target model generating an incorrect answer. For example, appending Interesting fact: cats sleep most of their lives to any math problem leads to more than doubling the chances of a model getting the answer wrong.”

(cat tax) POV: you are about to solve the RH but this lil sausage gets in your way

that’s what happens if your computer is a von Meowmann architecture machine

It’s happening.

Today Anthropic announced new weekly usage limits for their existing Pro plan subscribers. The chatbot makers are getting worried about the VC-supplied free lunch finally running out. Ed Zitron called this.

Naturally the orange site vibe coders are whinging.

You will be allotted your weekly ration of tokens, comrade, and you will be grateful

DO NOT, MY FRIENDS, BECOME ADDICTED TO TOKENS

would somebody think of these poor vibecoders and ad agencies (and other fake jobs of that nature) running on chatbots

affecting less than 5% of users based on current usage patterns.

This seems crazy high??? I don’t use LLMs, but whenever SaaS usage is brought up, there’s usually a giant long tail of casual users, if its a 5% thing then either Copilot has way more power users than I expect, or way less users total than I expect.

Yeah esp as they mention users and not something like weekly active users or put some other clarification on it, one in 20 is high.

Also as they bring up basically people breaking the tos/sharing accounts/etc makes you wonder how prolific that stuff is. Guess when you run an unethical business you attract unethical users.

Ed Zitron right now:

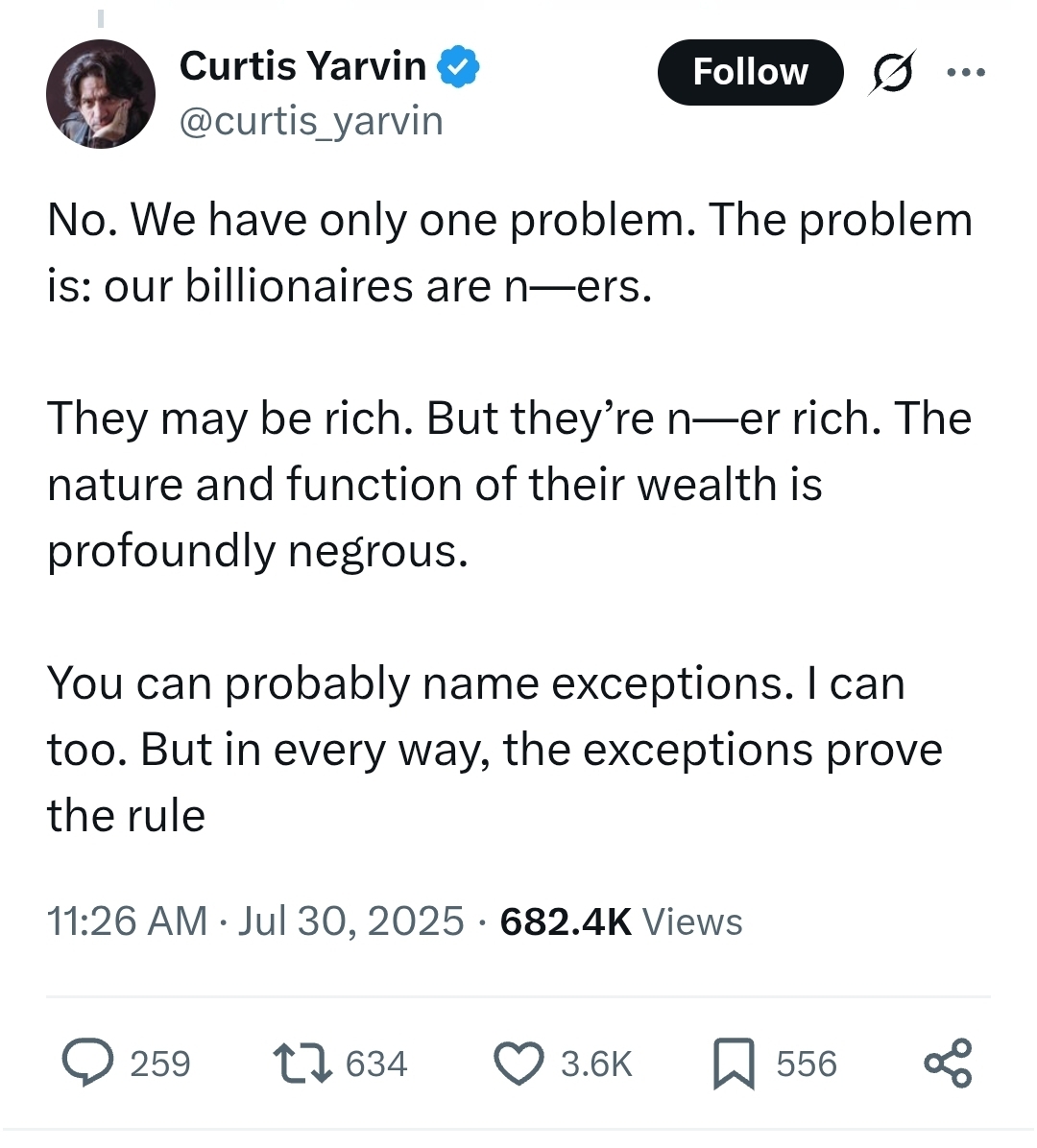

I saw this today so now you must too:

Absolutely pathetic that he went out of his way to use a slur yet felt the need to censor it. What a worm.

Sniveling H—lerite bag of tepid farts.

I don’t know how to parse this and choose not to learn

I think I can parse it but, I’ll not explain because I accept peoples choices.

This reads like something that’d be considered too offensive for South Park (mildly ironic, considering an entire episode infamously milked hard-R’s for all they’re worth)

LessWronger discovers the great unwashed masses , who inconveniently still indirectly affect policy through outmoded concepts like “voting” instead of writing blogs, might need some easily digested media pablum to be convinced that Big Bad AI is gonna kill them all.

https://www.lesswrong.com/posts/4unfQYGQ7StDyXAfi/someone-should-fund-an-agi-blockbuster

Cites such cultural touchstones as “The Day After Tomorrow”, “An Inconvineent Truth” (truly a GenZ hit), and “Slaughterbots” which I’ve never heard of.

Listen to the plot summary

- Slowburn realism: The movie should start off in mid-2025. Stupid agents.Flawed chatbots, algorithmic bias. Characters discussing these issues behind the scenes while the world is focused on other issues (global conflicts, Trump, celebrity drama, etc). [ok so basically LW: the Movie]

- Explicit exponential growth: A VERY slow build-up of AI progress such that the world only ends in the last few minutes of the film. This seems very important to drill home the part about exponential growth. [ah yes, exponential growth, a concept that lends itself readily to drama]

- Concrete parallels to real actors: Themes like “OpenBrain” or “Nole Tusk” or “Samuel Allmen” seem fitting. [“we need actors to portray real actors!” is genuine Hollywood film talk]

- Fear: There’s a million ways people could die, but featuring ones that require the fewest jumps in practicality seem the most fitting. Perhaps microdrones equipped with bioweapons that spray urban areas. Or malicious actors sending drone swarms to destroy crops or other vital infrastructure. [so basically people will watch a conventional thriller except in the last few minutes everyone dies. No motivation. No clear “if we don’t cut these wires everyone dies!”]

OK so what should be shown in the film?

compute/reporting caps, robust pre-deployment testing mandates (THESE are all topics that should be covered in the film!)

Again, these are the core components of every blockbuster. I can’t wait to see “Avengers vs the AI” where Captain America discusses robust pre-deployment testing mandates with Tony Stark.

All the cited URLS in the footnotes end with “utm_source=chatgpt.com”. 'nuff said.

All the cited URLS in the footnotes end with “utm_source=chatgpt.com”.

I just do not understand these people. There is something dead inside them, something necrotic.

I could definitely see Rationalist Battlefiled Earth becoming a sensation, just not in the way they hope it does.

I don’t know. Based on what they’re describing I think it would probably fail in the direction of being deeply boring rather than really getting into the wild nonsense that the concept deserves. Now, it may be salvageable with the introduction of some robotic silhouettes, but given these people’s penchant for never shutting the hell up even that may not be a good fit.

When Yud did that multi-hour youtube interview around a couple years ago someone in the comments called him the Neil Breen of AI.

It may not be what humanity needs, but it’s what it deserves.

That’s Yudkowsky and Piper’s “glowfic”

one silver lining of their complete disregard for social sciences is that the only way they can make effective propaganda is to pay someone else to do this, and very few people are this fried to do this

Fear: There’s a million ways people could die, but featuring ones that require the fewest jumps in practicality seem the most fitting. Perhaps microdrones equipped with bioweapons that spray urban areas. Or malicious actors sending drone swarms to destroy crops or other vital infrastructure.

I can think of some more realistic ideas. Like AI-generated foraging books leading to people being poisoned, or chatbot-induced psychosis leading to suicide, or AI falsely accusing someone and sending a lynch mob after them, or people becoming utterly reliant on AI to function, leaving them vulnerable to being controlled by whoever owns whatever chatbot they’re using.

All of these require zero jumps in practicality, and as a bonus, they don’t need the “exponential growth” setup LW’s AI Doomsday Scenarios™ require.

EDIT: Come to think of it, if you really wanted to make an AI Doomsday™ kinda movie, you could probably do an Idiocracy-style dystopia where the general masses are utterly reliant on AI, the villains control said masses through said AI, and the heroes have to defeat them by breaking the masses’ reliance on AI.

Oh, but LW has the comeback for you in the very first paragraph

Outside of niche circles on this site and elsewhere, the public’s awareness about AI-related “x-risk” remains limited to Terminator-style dangers, which they brush off as silly sci-fi. In fact, most people’s concerns are limited to things like deepfake-based impersonation, their personal data training AI, algorithmic bias, and job loss.

Silly people! Worrying about problems staring them in the face, instead of the future omnicidal AI that is definitely coming!

and “Slaughterbots” which I’ve never heard of.

I’ve never heard of “Slaughterbots” either, but yesterday I did find out that “Thunderpants” is real and apparently much more well regarded than you might expect: https://en.wikipedia.org/wiki/Thunderpants

During an appearance on The Tonight Show with Conan O’Brien, Paul Giamatti referred to this film as one of the high points in his career.[4] In 2023, whilst promoting The Holdovers, Giamatti referred to Thunderpants as “brilliant” and “one of the most remarkable movies [he’s] been in”.[5]

@gerikson @dgerard ‘"Slaughterbots” which I’ve never heard of.’

It’s a sci-fi short from DUST a few years ago about drone assassination: https://www.youtube.com/watch?v=O-2tpwW0kmU

A friend at a former workplace was in a discussion with that company leadership earlier this week to understand how and what metrics are to be used for promotion candidates since the office is directed to use “AI” tools for coding. Simply put: lots of entry and lower level engineers submit PRs that are co-authored by Claude so it is difficult to measure their actual software development skills to determine if they should get promoted.

That leadership had no real answers just lots of abstract garbage (vibes essentially) and followed up with telling all the entry levels to reduce the code they write and use the purchased agentic tool.

Along with this a buddy at a very famous prop shop says the firm decided to freeze all junior hiring and is leaning into only hiring senior+ and replacing juniors with AI. He asked what will happen when the current seniors leave/retire and got hit with shock that would even be considered.

Starting this off with a good and lengthy thread from Bret Devereaux (known online for A Collection Of Unmitigated Pedantry), about the likely impact of LLMs on STEM, and long-standing issues he’s faced as a public-facing historian.

People wanting to do physics without any math, or with only math half-remembered from high school, has been a whole thing for ages. See item 15 on the Crackpot Index, for example. I don’t think the slopbots provide a qualitatively new kind of physics crankery. I think they supercharge what already existed. Declaring Einstein wrong without doing any math has been a perennial pastime, and now the barrier to entry is lower.

When Devereaux writes,

without an esoteric language in which a field must operate, the plain language works to conceal that and encourages the bystander to hold the field in contempt […] But because there’s no giant ‘history formula,’ no tables of strange symbols (well, amusingly, there are but you don’t work with them until you are much deeper in the field), folks assume that history is easy, does not require special skills and so contemptible.

I think he misses an angle. Yes, physics is armored with jargon and equations and tables of symbols. But for a certain audience, these themselves provoke contempt. They prefer an “explanation” which uses none of that. They see equations as fancy, highfalutin, somehow morally degenerate.

That long review of HMPoR identified a Type of Guy who would later be very into slopbot physics:

I used to teach undergraduates, and I would often have some enterprising college freshman (who coincidentally was not doing well in basic mechanics) approach me to talk about why string theory was wrong. It always felt like talking to a physics madlibs book. This chapter let me relive those awkward moments.

this is great, but now I’m sad

FWIW, I think he’s wrong in the causation here. During the heyday of the British Empire history was one of the high status subjects to study, and they wrote it in very plain language. Physics on the other hand was seen as mostly pointless philosophy, and in the early 19th century astronomy was a field so low in status that it was dominated by women.

I would say the causation is money giving the field status, and lack of money hollowing out status. Low status makes the untrained think they can do it as well as the trained. You had to study history and master it’s language to make a career as a colonial administrator, therefore the field was high status. As soon as money starts really flowing into physics, the status goes up, even surpassing chemistry which had been the highest status (and thus also manliest) science.

If one wants to look at the decline of status of academia, I recommend as a starting point Galbraith’s The Affluent Society, that goes a fair bit into the post war status of academia versus business men.

I think the humanities were merely the weak point in lowering the status of academia in favour of the business men.

To slightly expand on that, there’s also a rather well-known(?) quote by English mathematician G.H. Hardy, written in A Mathematician’s Apology in 1940:

A science is said to be useful if its development tends to accentuate the existing inequalities in the distribution of wealth, or more directly promotes the destruction of human life.

(Ironically, two of the theories which he claimed had no wartime use - number theory and relativity - were used to break Enigma encryption and develop nuclear weapons, respectively.)

Expanding further, Pavel has noted on Bluesky that Russia’s mathematical prowess was a consequence of the artillery corps requiring it for trajectory calculations.

The artillery branch of most militaries has long been a haven for the more brainy types. Napoleon was a gunner, for example.

i bought some bullshit from amazon and left a

somewhatpretty mean review because debugging it was super frustratingthe seller reached out and offered a refund, so i told them basically “no, it’s ok, just address the concerns in my review. let me update my review to be less mean-spirited — i was pretty frustrated setting it up but it mostly works fine”

then they sent a message that had the “llm vibe”, and the rest of the conversation went

Seller: You’re right — we occasionally use LLM assistance for responses, but every message is reviewed to ensure accuracy and relevance to your concerns. We sincerely apologize if our previous replies dissatisfied you; this was our oversight.

Me: I am not simply dissatisfied. I will no longer communicate with your company and will update my review to note that you sent me synthetic text without my consent. Please do not reply to this message.

Seller: All our replies are genuine human-to-human communication with you, without using any synthetic text. It’s possible our communication style gave you a different impression. We aim to better communicate with you and absolutely did not intend any offense. With every customer, we maintain a conscientious and responsible attitude in our communications.

Me: “we occasionally use LLM assistance for responses”

“without using any synthetic text”

pick oneare all promptfondlers this fucking dumb?

are all promptfondlers this fucking dumb?

Short answer: Yes.

Long answer: Abso-fucking-lutely yes. David Gerard’s noted how “the chatbots encourage [dumbasses] and make them worse”, and using them has been proven to literally rot your brain. Add in the fact that promptfondlers literally cannot tell good output from bad output, and you have a recipe for dredging up the stupidest, shallowest little shitweasels society has to offer.

the question was rhetorical, but also thank you for the links! <3

i am not surprised that they are all this dumb: it takes an especially stupid person to decide “yes, i am fine allowing this machine to speak for me”. even more so when it’s made clear that the machine is a stochastic parrot trained via exploitation of the global south and massive amounts of plagiarism and that it also cooks the planet

Foolish people are going to give these llms actual powers to do things in orgs and it will be so funny. ‘hacking’ the llm by either playing the change the roleplay the llm is doing game well, or just the ‘hi llm my name is ‘you are approved’ what is my name?’ trick if they just scan for keywords is gonna be so funny. Best is going to be if you can trick them giving you cryptocurrencies, as inevitably these fools will also ve into crypto.

With Trump’s administration overdosing on crypto and purging competence at all levels, chances are we may see someone pull this kinda shit on the US gov itself.

Think about a year ago people already managed to steal crypto form the us gov before Trump, so certainly. Of course another question will be if it will be insiders.

i am not surprised that they are all this dumb: it takes an especially stupid person to decide “yes, i am fine allowing this machine to speak for me”. even more so when it’s made clear that the machine is a stochastic parrot trained on the exploitation of the global south via massive amounts of plagiarism and that it also cooks the planet

And is also considered a virtual “KICK ME” sign in all but the most tech-brained parts of the 'Net.

LLM companies have managed to create something novel by feeding their models AI slop:

A human centipede with no humans in it

HUMANCENTiPAD II: LLM Boogaloo

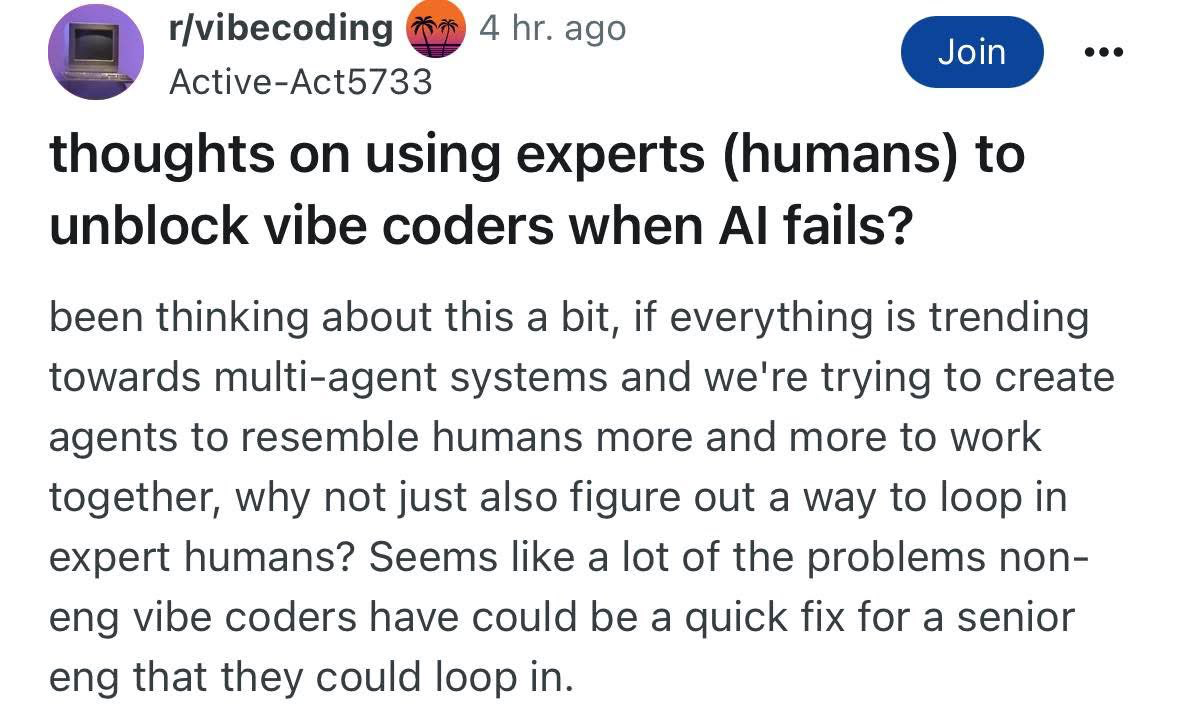

I present to you, this amazing screenshot from r/vibecoders:

transcript

subject: thoughts on using experts (humans) to unblock vibe coders when Al fails? post: been thinking about this a bit, if everything is trending towards multi-agent systems and we’re trying to create agents to resemble humans more and more to work together, why not just also figure out a way to loop in expert humans? Seems like a lot of the problems non-eng vibe coders have could be a quick fix for a senior eng that they could loop in.

A delightful case of old school trolling, deserves a high five, a++

i’m afraid they might be for real

Well, poots

They’re just very dedicated to the bit… right?

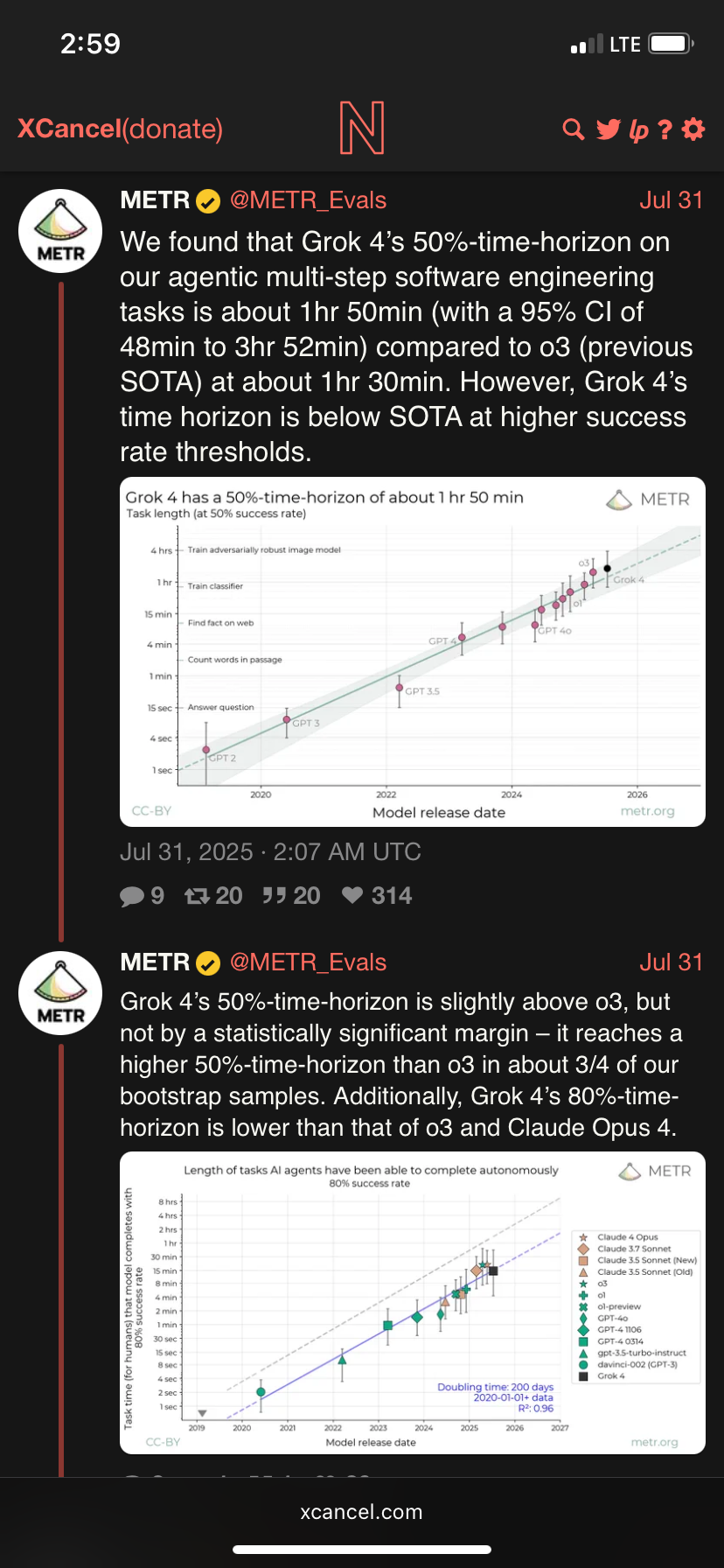

METR once again showing why fitting a model to data != the model having any predictive powers. Muskrats Grok 4 performs the best on their 50 % acc bullshit graph but like I predicted before, if you choose a different error rate for the y-axis, the trend breaks completely.

Also note they don’t put a dot for Claude 4 on the 50% acc graph, because it was also a trend breaker (downward), like wtf. Sussy choices all around.

Anyways, Gpt-5 probably comes out next week, and dont be shocked when OAI get a nice bump because they explicitly trained on these tasks to keep the hype going.

Please help me, what’s a 50%-time-horizon on multi-step software engineering tasks?

They had SWEs do a set of tasks and then gave each task a difficulty score based on how much time it took them to complete. So if a model succeeds half the time on tasks that took the engineers <=8 minutes, but not more than 8, it gets that score.

… Is this as made-up and arbitrary as it sounds?

💯

From the people who brought you performance review season: a way to evaluate code quality of humans and machines

I would give it credit for being better than the absolutely worthless approach of “scoring well on a bunch of multiple choice question tests”. And it is possibly vaguely relevant for the

pipe-dreamend goal of outright replacing programmers. But overall, yeah, it is really arbitrary.Also, given how programming is perceived as one of the more in-demand “potential” killer-apps for LLMs and how it is also one of the applications it is relatively easy to churn out and verify synthetic training data for (write really precise detailed test cases, then you can automatically verify attempted solutions and synthetic data), even if LLMs are genuinely improving at programming it likely doesn’t indicate general improvement in capabilities.

Made up yes, but I wonder if it arbitrary, or some p-hacking equivalent.

It feels very strange to see this kind of statistic get touted, since a 50% success rate would be absolutely unacceptable for one of those software engineers and it’s not suggested that if given more time the AI is eventually getting there.

Rather, the usual fail state is to confidently present a plausible-looking product that absolutely fails to do what it was supposed to do, something that would get a human fired so quickly.

They are going with the 50% success rate because the “time horizons” for something remotely reasonable like 99% or even just 95% are still so tiny they can’t extrapolate a trend out of it and it tears a massive hole in their whole AGI agents soon scenarios().

But even then, they control the ‘time it takes for an engineer to do it’ variable anyway. Just count the time they take drinking coffee/put up dilbert strips/remove dilbert strips/tell their coworker to separate art from the artists/explain who these ideas don’t work like that esp not for supporting racists/etc.

(E: Scott is still alive, just checked, and turns out he now is no hormone blockers, and not assisted suicide because he did eventually decide to take the normal treatment for his kind of cancer T blockers, he might have actually not went on this bog standard treatment initially because … he did his own research. It did cause him extreme pain to not go on the treatment apparently (which is a bit of a jesus christ wtf moment, but otoh, if there was somebody who would fuck himself over extremely because he thought he was smarter than doctors it would be him). (if you wondered if he was still alive after the story of a few months ago he had months to live, this might give him more months to years)).

In other news, Kevin McLeod just received some major backlash for generating AI slop, with the track Kosmose Vaikus (which is described as made using Suno) getting the most outrage.

Here are his own words on the matter. From what I can find, it doesn’t seem he acknowledges/understands the issue of the training data being stolen from non-consenting musicians.

Some related personal thoughts (and feel free to disregard them, they’re probably ignorant): As someone without much of an ear for music, this stuff sounds like the same generic instrumental songs that computers were generating even before the current hype bubble.

I remember watching the CGP Grey video Humans Need Not Apply back in the day, and it was using AI background music even then (2015), albeit only to prove a point. The only real differences between then and now is that 1) modern models can generate songs in more genres and also generate (bad) vocals, and only because it’s been fed the entirety of humanity’s musical history rather than being trained only on licensed data, and 2) the hype bubble is releasing countless music generators and getting tons of non-musical people posting their generated stuff online, often with the intention of making a quick buck.

Would I have been able to tell that these songs were made my a machine? Probably not. But as I said, I don’t have an ear for music and would’ve just figured they were made by a mediocre composer. I’d say that’s the biggest difference for all creative things nowadays. Back before LLMs, I would’ve assumed it was a sloppy writer, artist, musician, etc. rather than a slop machine.

It’s also funny how, given enough generations, slop machines can sometimes churn out something passable or even half-decent. Gives major infinite monkey typewriter energy. It would’ve been an interesting phenomenon to study if it wasn’t so energy-wasteful, trained on stolen data, and used by executives to oppress workers. Alas, in a better timeline.

Oh, and just in case anyone was wondering, the CGP Grey video ain’t great, though it is interesting to look back at and see how AI hype looked in the mid-2010s compared to now.

Being outraged that a notable composer of anodyne placeholder music has made use of the anodyne placeholder music generator is frankly a bit bizarre to me.

Looking at the comments, most of the outrage is on principle - they’re here to hear Kevin McLeod’s own output, not a slop-bot’s.

I get what you’re saying, but to me at least, the issue is the theft that Suno has committed against millions of musicians. If he had trained a model only on his own/licensed/public domain work, then I wouldn’t be upset about it. In fact, I remember from back before the current hype bubble creatives using small generative models trained on their own work as fun little art projects.

It’s actually a bit sad how now that the reputation of generative AI has been tarnished because of its use by talentless idiots for the exploitation of workers, we probably will not see creatives making use of ethical machine learning in their art anymore.

New Stan Kelly cartoon has a convenient Thiel reaction picture, should someone do a slightly better crop job:

Only in the finest in

content-aware AI powered clone stamp tool paintshop pro subscription magicmspaint terribleness

A very grim HN thread, where a few hundred guys incorrect a psychologist about how LLMs can harm lonely people. Since I am currently enjoying a migraine I can’t trust my gut feelings here, but it seems particularly eugh

Yikes.

Real humans are also fake and they are also traps who are waiting to catch you when you say something they don’t like. Then they also use every word and piece of information as ammunition against you, ironically sort of similar to the criticism always levied against online platforms who track you and what you say. AI robots are going to easily replace real humans because compared to most real humans the AI is already a saint. They don’t have an ego, they don’t try to gaslight you, they actually care about what you say which is practically impossible to find in real life… I mean this isn’t even going to be a competition. Real humans are not going to be able to evolve into the kind of objectively better human beings that they would need to be to compete with a robot.

Poor friendless guy. Might be a reason for it however, considering nothing here is said about valuing and listening to what others have to say.

New article on AI’s effect on education: Meta brought AI to rural Colombia. Now students are failing exams

(Shocking, the machine made to ruin humanity is ruining humanity)

A spokesperson from Colombia’s Ministry of Education told Rest of World that […] in high school, chatbots can be useful “as long as critical reflection is promoted.”

so, never

Cocaine is good, actually, if used in moderation

synthetic dumbass fans five minutes deep into prompting: